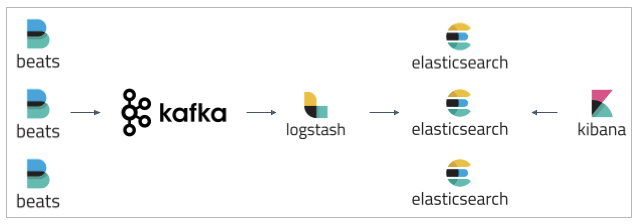

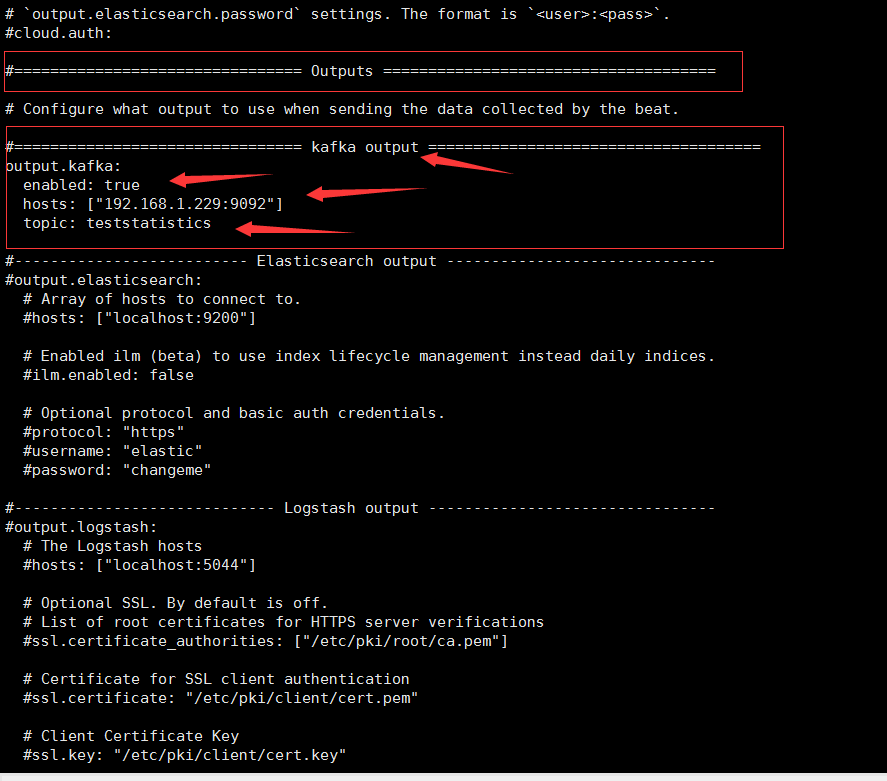

etc/logstash/conf.d/kafka/kafka_nf /etc/logstash/conf.d/logstash.

=true -Dfile.encoding=0 -XX:+HeapDumpOnOutOfMemoryError

Hi all, I'm faced with an issue where my Filebeat instance is either not sending files to my Kafka instances or not reading the specified log file in my filebeat.yml config. My config looks like this: output.

Deploy Kafka with ELK Stack on AWS EC2 Instance (Part 1), by headintheclouds, Feb 2023, Dev Genius.

-XX:CMSInitiatingOccupancyFraction=75 -XX:+UseCMSInitiatingOccupancyOnly

Pipeline.workers: : 1000 path.logs: /var/log/logstash

Logstash configuration file /etc/logstash/logstash.yml pipeline:

Given that Kafka is in the mix, is there a reason that Filebeat appears to have slowed down and then played catch-up? This sounds like backpressure, but after talking to the solution architects, they think that Kafka should have buffered the data and Filebeat should have worked steadily.

name: filebeats.log. Configure log file size limit. If limit is reached, log file will be automatically rotated. rotateeverybytes: 10485760 (10 MB). Number of rotated log files to keep.

Logstash pipelines file /etc/logstash/pipelines.yml - pipeline.id: another_test queue.type: persisted

Use kubectl exec to get into the pod and test whether the modules are enabled. Delete the readiness probe from the DaemonSet; then the pods and containers run.

Install the Kafka plugin: /usr/share/logstash/bin/logstash-plugin install logstash-output-kafka

T11:30:03.448+0530 INFO registrar/registrar.go:134 Loading registrar data from D:DevelopmentAvectofilebeat-6.6.2-windows-x8664dataregistry

T11:30:03.448+0530 INFO registrar/registrar.go:141 States Loaded from registrar: 10

T11:30:03.448+0530 WARN beater/filebeat.

Just install 6.8.11-SNAPSHOT with the Kafka output configuration.

WARN Connection to node 1 (node2/192.168.88.101:9092) could not be established.
INFO Retrying leaderEpoch request for partition test_10m-2 as the leader reported an error: UNKNOWN_SERVER_ERROR ()

This section shows how to set up Filebeat modules to work with Logstash when you are using Kafka in between Filebeat and Logstash in your publishing pipeline.

output configuration 3, Configuration file 1.

But it needs more information about Kafka and the Kafka Streams API.

As for the second one, it is of course a more professional approach than the one I explained above.

So first, you can send data with the timestamp to the topic. And then, look for the last data timestamp in the topic. It will give you some information about timing.

java.io.IOException: Connection to node2:9092 (id: 1 rack: null) failed.
at .NetworkClientUtils.awaitReady(NetworkClientUtils.java:71)
at (ReplicaFetcherBlockingSend.scala:102)
at (ReplicaFetcherThread.scala:310)
at (AbstractFetcherThread.scala:208)
at (AbstractFetcherThread.scala:173)
at (AbstractFetcherThread.scala:113)
at (ShutdownableThread.scala:96)

Our game servers are configured to emit their log messages to log files, which we send to our log aggregator/visualizer, in this case logz.io.

Use Filebeat to collect Kafka logs to Elasticsearch 1, Demand analysis 2, Configure Filebeat 1.

The only Kafka ecosystem feature I'm aware of that can help you do something like that is Kstreams (but you have to know how to develop using the Kstreams API), or another piece of Confluent software called KSQL, which allows SQL stream processing on top of Kafka topics and is more oriented to analytics (i.

WARN Error when sending leader epoch request for Map(test_10m-2 -> (currentLeaderEpoch=Optional, leaderEpoch=158)) ()
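The timestamp approach mentioned above (send records that carry a timestamp, then look at the last timestamp in the topic) can be illustrated with a minimal Python sketch. It makes no claim about any particular Kafka client: the `Record` class and both function names are hypothetical stand-ins, and in a real setup the records would come from whatever consumer you use to read the topic.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Record:
    """Hypothetical stand-in for a record read back from a Kafka topic."""
    timestamp_ms: int  # timestamp the sender attached to the record
    value: str


def last_timestamp_ms(records: Iterable[Record]) -> Optional[int]:
    """Return the newest timestamp among the records, or None if there are none."""
    newest = None
    for record in records:
        if newest is None or record.timestamp_ms > newest:
            newest = record.timestamp_ms
    return newest


def delay_ms(records: Iterable[Record], now_ms: int) -> Optional[int]:
    """Return how far the newest record lags behind now_ms (None for an empty topic)."""
    newest = last_timestamp_ms(records)
    return None if newest is None else now_ms - newest
```

A large or growing delay would suggest that the shipper has fallen behind; a small, steady one would suggest data is arriving on time. This only covers the simple timestamp check, not the Kstreams or KSQL route.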
